And no one would be able to decode the nonsense.ĪSCII wasn't fit for real life use.

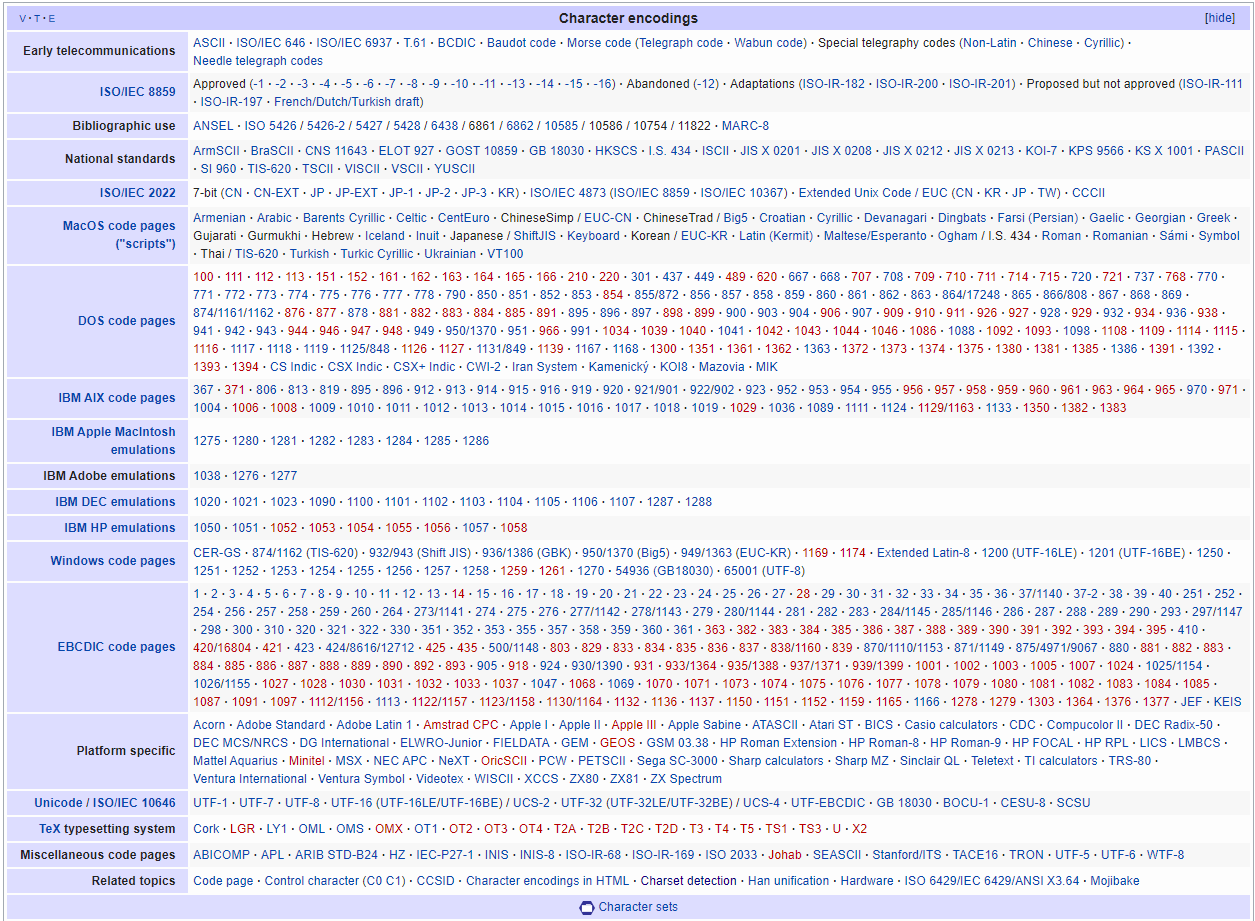

If the Greek computers only accepted Greek and the English computers only sent English.? You would just be shouting into an empty cave. Imagine if all these countries decided what they each thought the standards should be. The internet is just a huge connection of computers around the world. This sounds a little insane.Įxactly! We will have zero chance of reliably interchanging data. Example of completely garbled text (mojibake). It means character transformation in Japanese. It's garbled text you may sometimes see from decoding text, but using the wrong decoding. This problem even has its own term: Mojibake. We don't even have enough spare characters for Chinese, let alone agreeing that the final characters should be Chinese ones. So how on EARTH were we ever going to standardise this? Or make it work between differing languages? Between the same language with different codepages? In a non English language?Ĭhinese has over 100,000 different characters. Greek and Chinese both have multiple codepages, for example. Here's a collection of over 465 different codepages! You can see that there were multiple codepages EVEN for the same language.

Character encoding list code#

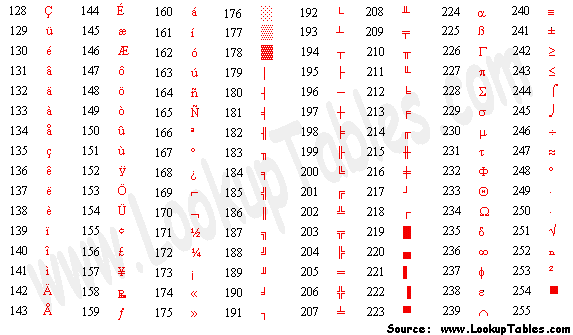

These different endings for ASCII were called code pages. And everyone wanted to implement their own encodings for the end of ASCII. Some squiggles, some background icons, math operators and some accented characters like é.īut other computers didn't all follow this. The problem was with the spare ones.īelow is what the first IBM computers did with the 128-255 encodings for ASCII. No one was debating what 0-127 in the ASCII encoding was. They decided what everyone was doing with 0-127, which is what ASCII was already doing. The American National Standards Institute ( ANSI - don't get confused with ASCII) is a standards body for establishing standards across lots of different fields. But everyone had different ideas about what those final characters should be. People began to think about what would be best to fill those remaining characters. The spare characters were 127 through to 255. ASCII Character TableĬan you see how it ends with 127? We have some spare room at the end. Remember the first 32 are unprintable control characters. All lowercase and uppercase A-Z and 0-9 were encoded to binary numbers. Let's look at an ASCII table here to see every character.

So we had 128 encodings that were unused. We could store all our control characters, all our numbers, all the English characters and have some left! Because one byte can encode 255 characters, and ASCII only needed 127 characters. Some of these control characters were used for instruments called teleprinters, so at the time they were useful (not so much now!)īut the control characters were things like 7 ( 111 in binary) that would make a bell sound on your PC, 8 ( 1000 in binary) that would print over the last character it just printed, or 12 ( 1100 in binary) that would clear a video terminal from all the text just written.Ĭomputers at this time were using 8 bits for one byte (they didn't always), so there were no issues. One byte (eight bits) was large enough to fit every English character, and some control characters too. With ASCII it can translate that into "Hello world". We didn't need to worry about any other characters and the American Standard Code for Information Interchange ( ASCII) was the character encoding that fit this purpose.ĪSCII is a mapping, from binary to alphanumeric characters. In the early days of the internet, it was English only. If all the text you're reading was once binary too, then how do we turn binary into text? Let's look at what we used to do back in the beginning. So 8 0's or 1's make up one byte.Įverything eventually ends up as binary – programming languages, mouse moves, typing, and all the words on the screen.

One digit is called a bit, and a byte is 8 bits. Binary is the language of computers, and is made up of 0's and 1's. Introduction to EncodingĪ computer only can understand binary.

I'll also cover some Computer Science theory you need to understand. I'll explain a brief history of encoding in this article (and I'll discuss how little standardisation there was) and then I'll talk about what we use now. Or even if you're just curious how words end up on your screen – yep, that's encoding, too. If you are coding an international app that uses multiple languages, you'll need to know about encoding.
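The following minimal sketch makes the bit and byte arithmetic and the ASCII "Hello world" mapping concrete. It assumes Python 3 and uses only built-ins (`str.encode("ascii")`, `format(..., "08b")` and `int(..., 2)`). `to_binary` is a hypothetical helper name chosen for illustration.

```python
# Eight bits per byte gives 2**8 = 256 possible values, numbered 0 to 255.
BITS_PER_BYTE = 8
print(2 ** BITS_PER_BYTE)  # 256


def to_binary(text: str) -> str:
    """Hypothetical helper: encode text as ASCII and show each byte as 8 binary digits."""
    # encode("ascii") turns each character into its ASCII number (0-127);
    # a character outside ASCII raises UnicodeEncodeError instead.
    return " ".join(format(byte, "08b") for byte in text.encode("ascii"))


bits = to_binary("Hello world")
print(bits)

# Going the other way: read each 8-digit group as a base-2 number,
# collect the numbers into bytes, and decode those bytes as ASCII.
decoded = bytes(int(group, 2) for group in bits.split()).decode("ascii")
print(decoded)  # Hello world
```

Each character becomes exactly one byte here. That one-to-one mapping is what made ASCII simple, and it is also why it runs out of room.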
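You can also inspect ASCII's 0-127 range and its control characters directly. This is another Python 3 sketch, built only on `chr`, `str.isprintable()` and the `ascii` codec. The constant names are illustrative labels, not part of any standard API.

```python
# ASCII defines 128 codes, numbered 0 to 127; byte values 128-255 are left over.
ASCII_CODES = range(128)

# str.isprintable() is False for the control characters: 0-31, plus 127 (DEL).
control = [code for code in ASCII_CODES if not chr(code).isprintable()]
print(len(control))  # 33

# The three control characters mentioned in the text, with their binary forms.
BELL, BACKSPACE, FORM_FEED = 7, 8, 12
for code in (BELL, BACKSPACE, FORM_FEED):
    print(code, format(code, "b"), repr(chr(code)))

# Accented characters such as é have no ASCII code at all.
try:
    "é".encode("ascii")
    e_acute_fits = True
except UnicodeEncodeError:
    e_acute_fits = False
print("é fits in ASCII:", e_acute_fits)  # é fits in ASCII: False
```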
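The code page problem is easy to reproduce with Python 3's built-in codecs: `cp437` (the original IBM PC character set), `latin-1` and `cp1253` (Windows Greek). This sketch reads the same spare byte, 0xE1, through each of them. The particular byte and code pages are arbitrary picks.

```python
# One byte value from the "spare" range above 127.
raw = bytes([0xE1])  # 225 in decimal

# Three real code pages: the original IBM PC set, Western European, and Windows Greek.
CODE_PAGES = ["cp437", "latin-1", "cp1253"]
readings = {page: raw.decode(page) for page in CODE_PAGES}
print(readings)  # {'cp437': 'ß', 'latin-1': 'á', 'cp1253': 'α'}

# Below 128 they all agree with ASCII, so plain English text reads the same everywhere.
english = b"Hello world"
print(all(english.decode(page) == "Hello world" for page in CODE_PAGES))  # True
```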
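Mojibake follows directly from code pages: write text with one code page and read it back with another. Here is a minimal sketch under the same Python 3 assumptions. The Greek sample text is arbitrary.

```python
# Greek text saved by a computer that uses the Greek code page cp1253...
original = "αβγ"
stored = original.encode("cp1253")
print(list(stored))  # [225, 226, 227]

# ...and then opened by computers that assume other code pages.
as_western = stored.decode("latin-1")
as_ibm_pc = stored.decode("cp437")
print(as_western)  # áâã
print(as_ibm_pc)   # ßΓπ

# Only decoding with the code page that was actually used gets the text back.
print(stored.decode("cp1253") == original)  # True
```

Nothing in the stored bytes records which code page wrote them. The reader has to guess, and a wrong guess produces exactly this kind of garbled text.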